Israel-Hamas and the AI mysteries

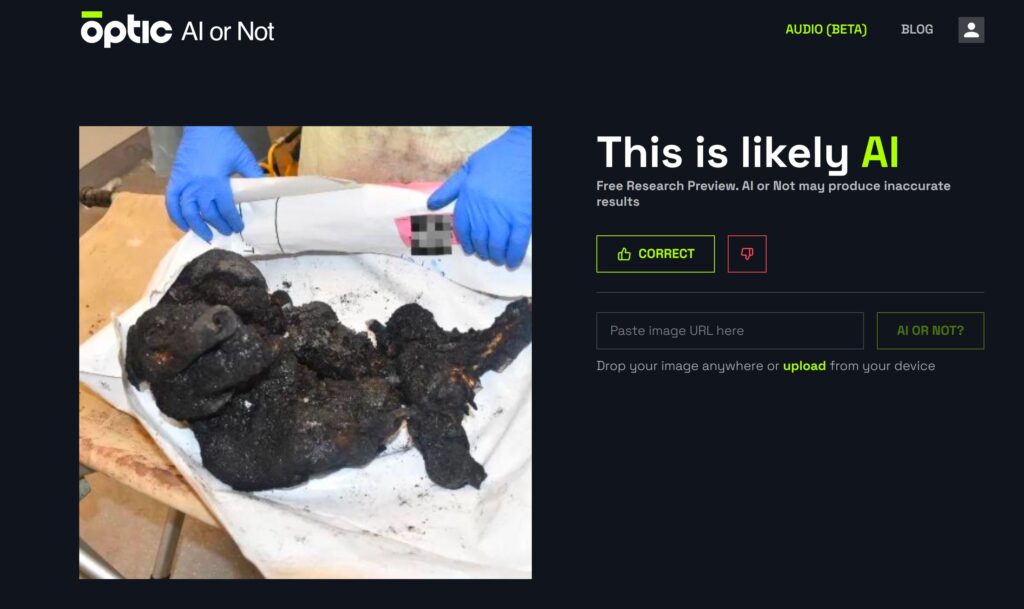

Ben Shapiro posted on X a “pictorial proof of dead jewish babies”. Someone tested the picture on aiornot.com and said it was AI-generated. I ran my own tests, and here is the outcome.

We all know that every conflict is also fought with propaganda. Social networks, with their relative lack of editorial control, help it quite a lot, and AI can add to that.

On one hand, you can fake things with good-quality AI-generated pictures. On the other hand, you can cast suspicion on any genuine picture.

I ran a test on the latest controversy, which concerns a photograph that Ben Shapiro posted on X as proof of the horrific acts committed by Hamas, including against children.

Some other people on X said this proof was fake: an AI-generated picture. They used a free website to run the test: aiornot.com.

So, I did the same. I downloaded the picture posted on X and ran a test on the same website. The result is nearly the same. I say “nearly” because in the screenshot posted by that user AI or Not said “This picture is generated by AI”, while in my case the verdict is the same but the wording is a bit more cautious.

Is this tool working correctly? I tested it with some pictures I have on my PC (which I know are real) and it confirmed they are “human”. Then I went to an AI picture-generating website, created a picture, downloaded it, ran the test, and it was correctly detected as AI. Again, the sentence said “is likely AI”.

So, let’s say this website does its job. It seems that this photo is fake. Then I went back to X to see whether anyone had replied to this accusation. Someone wrote this:

There was indeed a deliberately pixelated logo in the photo. But does that really influence the test?

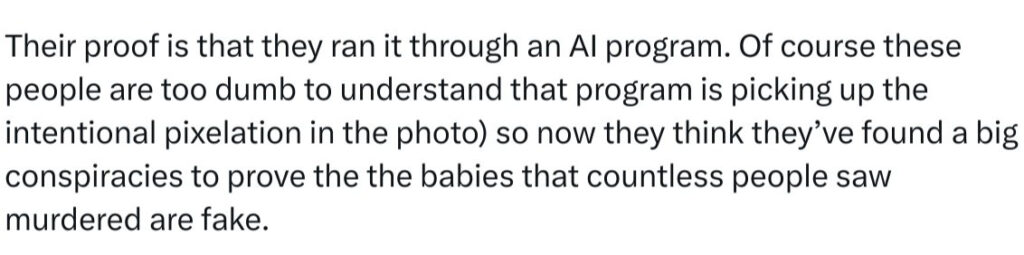

I searched Google for a random news photo and downloaded one: a soldier kicking someone on the ground, in Africa. I ran a test on the website, and it said it was OK, a “human” photo. A real one, not AI.

Then I added a pixelated area to the same photo, covering part of the boot. I saved it and ran a new test. Still “human”.

So, I guess the problem doesn’t lie in the pixels.
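For anyone who wants to repeat this step, here is a minimal sketch of what pixelating part of a picture means in practice. It is not the tool I used (I did it by hand in an image editor). The function name `pixelate_region`, the grayscale list-of-rows image format, and the block size are all assumptions made up for the illustration.

```python
def pixelate_region(pixels, left, top, right, bottom, block=8):
    """Return a copy of a grayscale image with one rectangle pixelated.

    pixels is a list of rows, each row a list of ints (0-255).
    The rectangle covers columns left..right-1 and rows top..bottom-1.
    Every block x block tile inside it is replaced by its average value.
    """
    out = [row[:] for row in pixels]  # copy, so the original stays untouched
    for by in range(top, bottom, block):
        for bx in range(left, right, block):
            ys = range(by, min(by + block, bottom))
            xs = range(bx, min(bx + block, right))
            values = [pixels[y][x] for y in ys for x in xs]
            mean = sum(values) // len(values)
            for y in ys:
                for x in xs:
                    out[y][x] = mean
    return out


if __name__ == "__main__":
    # Hypothetical 4x4 test image with a simple gradient.
    image = [[y * 4 + x for x in range(4)] for y in range(4)]
    for row in pixelate_region(image, 0, 0, 2, 2, block=2):
        print(row)
```

Only the pixels inside the rectangle change; everything else stays identical. That is why this is a useful control: if the verdict flips, the pixelated area is the only thing that can explain it.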

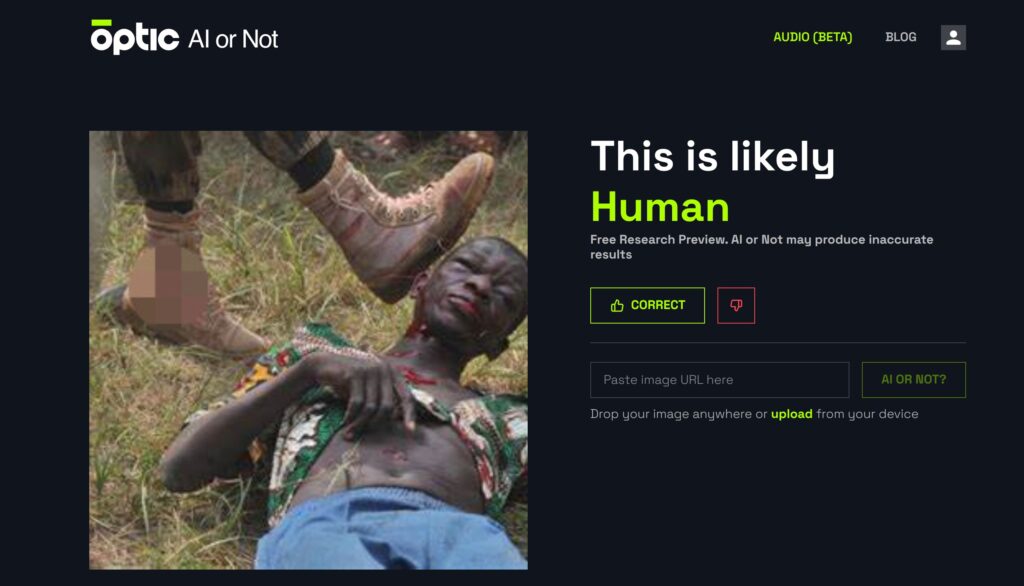

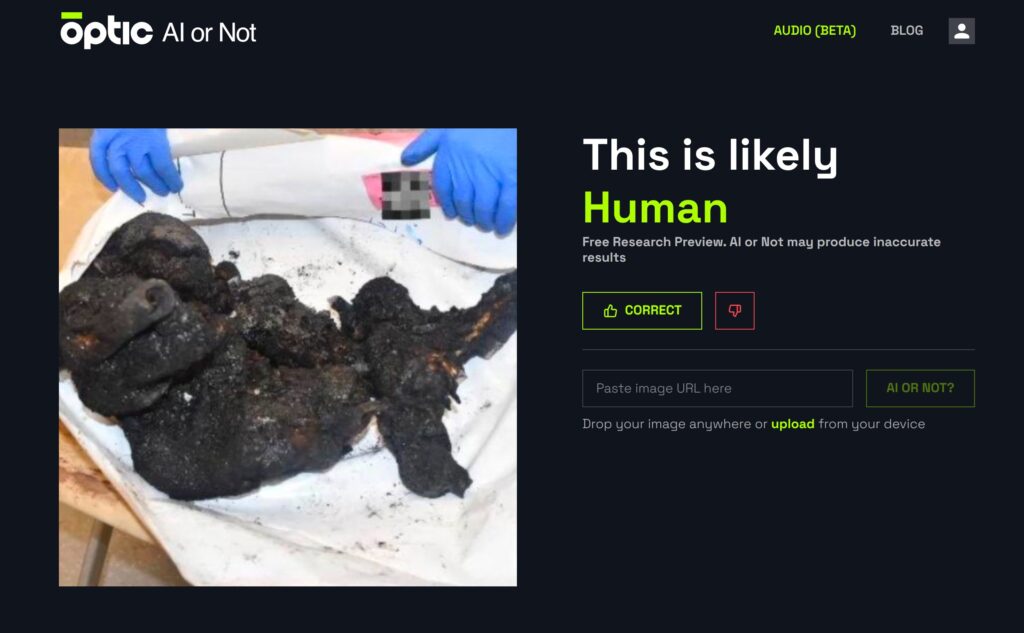

The story continued on X. Someone posted the following picture, saying it was the original. I downloaded it and ran a test:

Here I’m totally astonished: it actually seems that the photo posted by Shapiro is a fake, but why and how? The unconfirmed reports of those days concerned beheaded children, not the general fact that children were among the victims of the massacres. And Shapiro posted it as proof of the killings, not of any beheading. Why would he falsify a photograph, with all the confirmed material available?

Furthermore, if I can take a real photo, add some pixelization, and it is still recognized as “human”, it means that the software doesn’t check whether a picture has been manipulated; it only checks, as its name clearly says, whether it was created by AI. So the one with the puppet could simply be a Photoshop-modified picture, which still counts as “human” for the testing website.

By the way, I note that the puppet doesn’t seem to cast any shadow, while the doctor’s fingers do, although the light is quite vertical, so I can’t really be sure. The burnt little body, on the other hand, casts a shadow that is compatible with the shadow of the doctor’s fingers. Still, when the shadows are that short and the resolution is quite low, it’s difficult to prove any manipulation. There could also be some suspicious elements* in the burnt-body photo, by the way.

But still: why does it say that the first picture posted of the child is AI-generated, when it was never wrong with the other pictures I tested?

I decided to modify it: I just cropped it a bit and ran a new test. Here’s the result:

Now, it’s human.

What made it AI before? Something in the cropped part? The mystery remains, but now, from my humble point of view, it’s more a mystery about how all these tools work, if they actually work. If I find out, I’ll be back soon.
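One way to look into the “cropped part” question more systematically would be to trim one edge at a time, in small steps, and test every variant. Below is a minimal sketch that only computes the crop rectangles. The function name `crop_boxes` and its parameters are hypothetical, and the boxes use the (left, top, right, bottom) convention that image libraries such as Pillow accept for cropping. Uploading each variant to the detector is not shown, since I did that by hand on the website.

```python
def crop_boxes(width, height, percent=5, steps=3):
    """List crop rectangles that trim a single edge by growing amounts.

    Returns tuples (edge, trimmed_percent, (left, top, right, bottom)).
    Integer arithmetic keeps the pixel coordinates exact.
    """
    boxes = []
    for i in range(1, steps + 1):
        dx = width * percent * i // 100   # pixels trimmed horizontally
        dy = height * percent * i // 100  # pixels trimmed vertically
        trimmed = percent * i
        boxes.append(("left", trimmed, (dx, 0, width, height)))
        boxes.append(("top", trimmed, (0, dy, width, height)))
        boxes.append(("right", trimmed, (0, 0, width - dx, height)))
        boxes.append(("bottom", trimmed, (0, 0, width, height - dy)))
    return boxes


if __name__ == "__main__":
    # Hypothetical picture size; use the real one when repeating the test.
    for edge, trimmed, box in crop_boxes(1000, 800):
        print(edge, trimmed, box)
```

If the verdict changed only when one particular edge was trimmed, that would point to something in that strip of the picture. If it changed with any small crop, the verdict would look fragile rather than tied to a specific detail.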

NB: This is the first time, and for now I would say the only time, that I post something in English on my blog, which is usually in Italian. I apologize for my imperfect English, and at the same time I say “sorry” to my few Italian readers.

*For example: in the puppet photo there’s a line starting from the doctor’s right forefinger, which makes a 90-degree angle and ends quite naturally in a bow. In the burnt-body photo, it seems to be cut off a short distance from the body. And on the left of the body there’s a trail on the towel, which is absent in the other photo. The Israeli Prime Minister’s office, which released that picture, later blurred it. Maybe these strange traces are due to blur removal? Difficult to say. Anyway, if the photo was modified to replace the body with the puppet, it must have happened before it was blurred.

Quite obviously, whatever one may think about that single picture, it is only one picture, and it certainly doesn’t change the overall facts.